Why Lineage Matters in Regulated Industries

In banking, healthcare, insurance, and government, data lineage is not a nice-to-have; it is a regulatory requirement. Regulators such as the OCC and FDIC, and frameworks such as BCBS 239, HIPAA, and GDPR, expect organizations to demonstrate where data comes from, how it is transformed, and where it lands. When an auditor asks “How was this number in the quarterly risk report derived?”, the answer must trace back through every transformation, join, filter, and aggregation to the original source record.

For organizations that have built their data pipelines in Informatica PowerCenter, this lineage information exists — but it is deeply embedded in PowerCenter’s proprietary metadata layer. The critical question during migration is: how do you preserve this lineage chain when the underlying technology changes completely?

Losing lineage during migration is not just a technical problem — it is a compliance risk that can halt production deployments, trigger audit findings, and erode trust with regulators.

The stakes are highest in organizations subject to model risk management (SR 11-7), where every input to a financial model must be traceable to its source. A migration that breaks the lineage chain effectively orphans downstream models and reports, creating a governance gap that can take months to remediate.

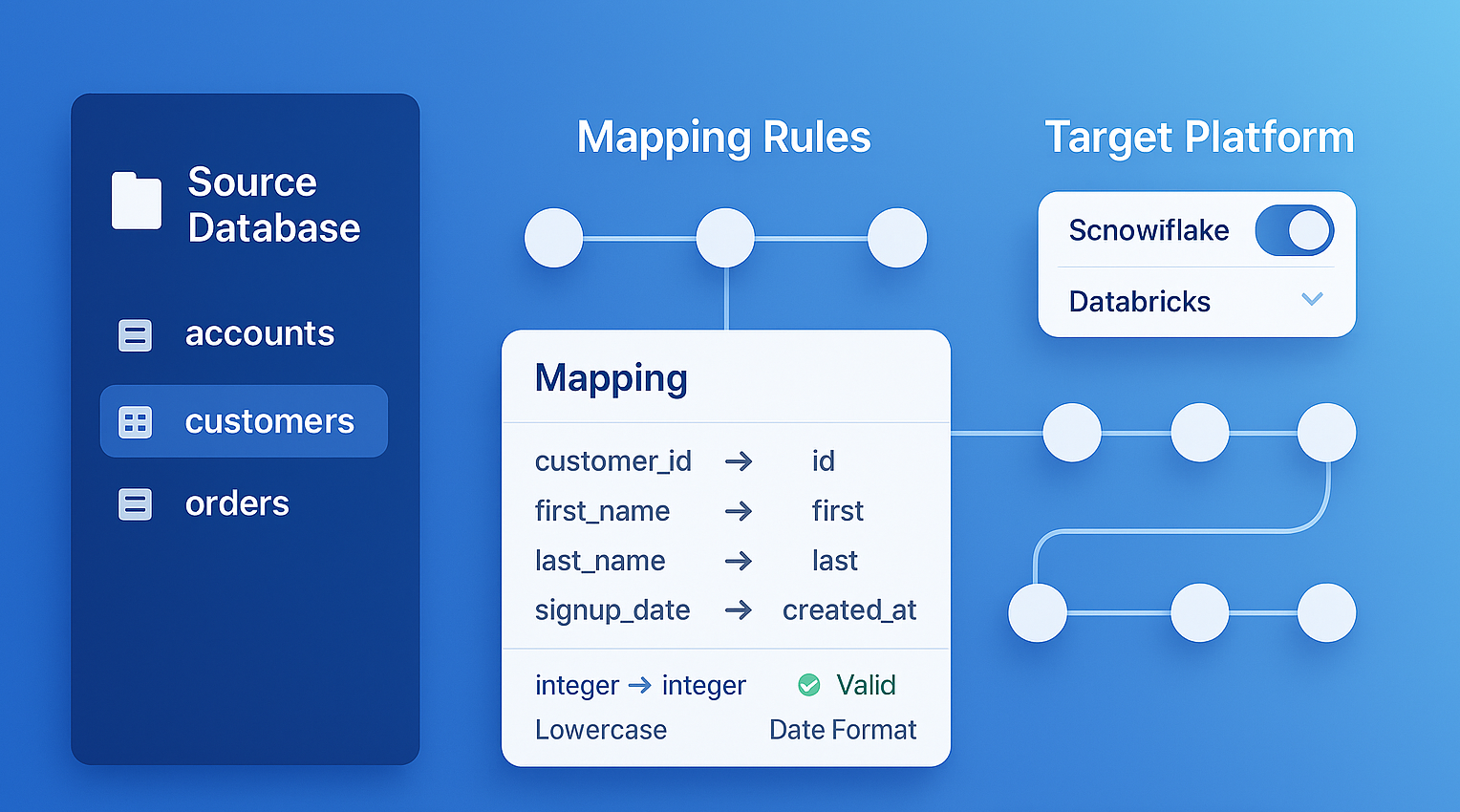

Informatica to MigryX Atlas migration — automated end-to-end by MigryX

The Lineage Problem: Proprietary Metadata Silos

Informatica PowerCenter stores metadata in several interconnected layers, none of which are designed for easy external consumption:

- Repository XML exports: The pmrep utility can export mappings, sessions, and workflows as XML files. These XML files contain the full definition of every transformation, port, expression, and connection, but the schema is proprietary, deeply nested, and version-dependent.

- Repository database tables: The PowerCenter repository is backed by a relational database (Oracle, SQL Server, or Sybase) with hundreds of internal tables. Tables like OPB_MAPPING, OPB_WIDGET, OPB_WIDGET_INST, and OPB_FIELD store transformation metadata, but the relationships between them are complex and undocumented.

- Session logs: Runtime execution logs capture actual data flows, row counts, and error details. These logs provide empirical lineage evidence but are stored as flat text files with no structured format.

- Data Analyzer & Metadata Manager: Informatica’s optional governance tools provide lineage visualization, but they query the same proprietary repository and do not export lineage in portable formats.

The net effect is that lineage in an Informatica environment is locked inside a proprietary ecosystem. When you decommission PowerCenter, you lose access to this lineage unless it has been explicitly extracted and preserved before the migration.
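As a rough illustration of the XML export route described above, the following Python sketch reads port-to-port links out of an exported mapping. The sample document is hypothetical and heavily trimmed, and the element and attribute names (MAPPING, CONNECTOR, FROMINSTANCE, FROMFIELD, TOINSTANCE, TOFIELD) are assumptions about a typical export; because the schema is version-dependent, check them against your own files before relying on them.

```python
import xml.etree.ElementTree as ET

# Hypothetical, heavily trimmed export. Real exports are far larger, and the
# element/attribute names used here are assumptions to verify per version.
SAMPLE_EXPORT = """
<POWERMART>
  <REPOSITORY NAME="REPO_DEV">
    <FOLDER NAME="CUSTOMER_DM">
      <MAPPING NAME="m_load_dim_customer">
        <CONNECTOR FROMINSTANCE="SQ_customers" FROMFIELD="first_name"
                   TOINSTANCE="TX_CLEAN" TOFIELD="first_name"/>
        <CONNECTOR FROMINSTANCE="TX_CLEAN" FROMFIELD="o_first_name"
                   TOINSTANCE="dim_customer" TOFIELD="customer_first_name"/>
      </MAPPING>
    </FOLDER>
  </REPOSITORY>
</POWERMART>
"""


def extract_connectors(xml_text):
    """Return (mapping, from_instance, from_field, to_instance, to_field) tuples."""
    root = ET.fromstring(xml_text.strip())
    edges = []
    for mapping in root.iter("MAPPING"):
        for conn in mapping.iter("CONNECTOR"):
            edges.append((
                mapping.get("NAME"),
                conn.get("FROMINSTANCE"),
                conn.get("FROMFIELD"),
                conn.get("TOINSTANCE"),
                conn.get("TOFIELD"),
            ))
    return edges


edges = extract_connectors(SAMPLE_EXPORT)
for edge in edges:
    print(edge)
```

Links like these only describe how ports are wired together. Expression text, lookup conditions, and router group filters would have to be read from other parts of the export to get full column-level lineage.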

MigryX Atlas: Lineage That Goes Deeper

While most lineage tools stop at table-level tracking, MigryX Atlas traces every column through every transformation — joins, filters, aggregations, CASE statements, and derived calculations. It automatically generates Source-to-Target Mapping documents (STTMs) that auditors and business analysts can review without reading code. This is not just metadata scanning — it is deep semantic analysis powered by MigryX’s precision AST parsers.

Column-Level Lineage: The Gold Standard

There are different levels of lineage granularity. Table-level lineage tells you which source tables feed which target tables. Job-level lineage tells you which ETL jobs participate in a data flow. But for regulated environments, column-level lineage is the gold standard.

Column-level lineage traces the path of each individual column from source to target, documenting every transformation applied along the way. For example:

SOURCE: customers.first_name (VARCHAR)
  → Expression TX_CLEAN: UPPER(LTRIM(RTRIM(first_name)))
  → Lookup LKP_REGION: joined on customer_id
  → Router RTR_STATUS: group ACTIVE (status_code = 'A')
  → TARGET: dim_customer.customer_first_name (VARCHAR)
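One way to hold a path like this in code is as an ordered list of hops. The sketch below is a minimal Python model of the example above; the `Hop` class and its field names are illustrative choices, not a MigryX or Informatica data structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hop:
    kind: str    # "Source", "Expression", "Lookup", "Router", or "Target"
    name: str    # column or transformation name
    detail: str  # data type, expression text, or condition


# The example path from the article, one hop per step.
PATH = [
    Hop("Source", "customers.first_name", "VARCHAR"),
    Hop("Expression", "TX_CLEAN", "UPPER(LTRIM(RTRIM(first_name)))"),
    Hop("Lookup", "LKP_REGION", "joined on customer_id"),
    Hop("Router", "RTR_STATUS", "group ACTIVE (status_code = 'A')"),
    Hop("Target", "dim_customer.customer_first_name", "VARCHAR"),
]


def describe(path):
    """Render the whole path as a single readable line."""
    return " -> ".join(f"{h.kind} {h.name} [{h.detail}]" for h in path)


def transformation_chain(path):
    """Keep only the intermediate hops between source and target."""
    return [h for h in path if h.kind not in ("Source", "Target")]


print(describe(PATH))
print([h.name for h in transformation_chain(PATH)])
```

Keeping the hops ordered and immutable makes it straightforward to render the same path either as an audit narrative or as a row in a mapping document.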

This level of detail is essential for several reasons:

- Audit trails: Regulators can trace any target column back to its source and understand every transformation applied.

- Impact analysis: When a source system changes a column definition, column-level lineage identifies every downstream target affected.

- Data quality root cause: When a data quality issue appears in a report, column-level lineage pinpoints exactly where the corruption or loss occurred.

- Migration validation: During migration, column-level lineage provides the specification against which the converted code must be validated.
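Impact analysis and audit tracing are both graph traversals over column-level edges: one walks downstream from a source column, the other upstream from a target. The Python sketch below assumes lineage has already been reduced to (source column, destination column) pairs; the column names, including `rpt_customer.full_name`, are made up for illustration.

```python
from collections import defaultdict, deque

# Hypothetical column-level edges: (upstream column, downstream column).
EDGES = [
    ("customers.first_name", "TX_CLEAN.o_first_name"),
    ("TX_CLEAN.o_first_name", "dim_customer.customer_first_name"),
    ("customers.customer_id", "LKP_REGION.region_code"),
    ("LKP_REGION.region_code", "dim_customer.region_code"),
    ("dim_customer.customer_first_name", "rpt_customer.full_name"),
]


def build_index(edges):
    """Build adjacency maps for both traversal directions."""
    downstream, upstream = defaultdict(set), defaultdict(set)
    for src, dst in edges:
        downstream[src].add(dst)
        upstream[dst].add(src)
    return downstream, upstream


def reachable(start, index):
    """Breadth-first search: every column reachable from start in the index."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for nxt in index.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


downstream, upstream = build_index(EDGES)

# Impact analysis: what is affected if customers.first_name changes?
print(sorted(reachable("customers.first_name", downstream)))

# Audit trail: where does dim_customer.region_code come from?
print(sorted(reachable("dim_customer.region_code", upstream)))
```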

How MigryX Extracts and Preserves Lineage

MigryX automatically traces column-level lineage through every transformation type in the PowerCenter mapping, producing comprehensive source-to-target documentation.

The final output is a Source-to-Target Mapping (STTM) document that captures the complete lineage for every column in every target table.

MigryX STTM Output Structure

Each STTM document generated by MigryX contains the following columns for every target field:

| STTM Column | Description |
|---|---|
| Target Column | The destination column name |
| Source Column | The originating source column(s) |
| Transformation Logic | Full expression chain from source to target |
| Business Rule | Human-readable description of the transformation intent |

The complete STTM document covers all governance requirements. Contact us for a sample.
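To make the four-column structure concrete, here is a small Python sketch that writes one STTM row as CSV. It is not MigryX's output format: only the column names come from the table above, and the row content, including the business rule wording, is invented for illustration.

```python
import csv
import io

# Column names taken from the STTM table in this article.
STTM_COLUMNS = ["Target Column", "Source Column", "Transformation Logic", "Business Rule"]

# Illustrative row; the business-rule text is an assumption, not real output.
rows = [
    {
        "Target Column": "dim_customer.customer_first_name",
        "Source Column": "customers.first_name",
        "Transformation Logic": "UPPER(LTRIM(RTRIM(first_name)))",
        "Business Rule": "Normalize first name: trim whitespace, then uppercase",
    },
]


def write_sttm(sttm_rows, stream):
    """Write STTM rows to any text stream as CSV with a header line."""
    writer = csv.DictWriter(stream, fieldnames=STTM_COLUMNS)
    writer.writeheader()
    writer.writerows(sttm_rows)


buffer = io.StringIO()
write_sttm(rows, buffer)
sttm_csv = buffer.getvalue()
print(sttm_csv)
```

CSV is used here only because reviewers can open it in a spreadsheet; the same rows could equally be loaded into a catalog or a database table.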

MigryX generates comprehensive Source-to-Target Mappings (STTMs) automatically, eliminating weeks of manual documentation

Why Manual Lineage Documentation Fails — And How MigryX Fixes It

Enterprise data estates contain thousands of interdependent programs. Manual lineage documentation is outdated the moment it is written. MigryX Atlas continuously analyzes your codebase and produces lineage maps that reflect the actual state of your data pipelines — not what someone documented six months ago. Teams using MigryX Atlas report reducing impact analysis time from weeks to hours.

Lineage in the Target Platform

Extracting lineage from the source is only half the challenge. The converted code on the target platform must also preserve lineage annotations so that the lineage chain remains intact after migration.

MigryX embeds lineage metadata in the generated code and integrates with leading data catalog and orchestration tools.
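The article does not specify how the lineage metadata is embedded, so the following Python sketch shows just one possible convention: a machine-readable comment header placed above generated SQL. The `-- @lineage` marker, the JSON field names, and both functions are hypothetical and should not be read as the MigryX format.

```python
import json


def lineage_header(target, sources, logic, origin):
    """Render a SQL comment block that carries lineage metadata as JSON."""
    payload = {"target": target, "sources": sources, "logic": logic, "origin": origin}
    body = json.dumps(payload, indent=2, sort_keys=True)
    lines = ["-- @lineage"] + ["-- " + line for line in body.splitlines()]
    return "\n".join(lines)


def parse_lineage_header(sql_text):
    """Recover the JSON payload from a header written by lineage_header."""
    lines = sql_text.splitlines()
    if not lines or lines[0].strip() != "-- @lineage":
        return None
    json_lines = []
    for line in lines[1:]:
        if not line.startswith("-- "):
            break  # first non-comment line ends the header
        json_lines.append(line[3:])
    return json.loads("\n".join(json_lines))


header = lineage_header(
    target="dim_customer.customer_first_name",
    sources=["customers.first_name"],
    logic="UPPER(LTRIM(RTRIM(first_name)))",
    origin="m_load_dim_customer",  # hypothetical PowerCenter mapping name
)
generated_sql = header + "\nSELECT UPPER(TRIM(first_name)) AS customer_first_name FROM customers;"
print(generated_sql)
print(parse_lineage_header(generated_sql))
```

Keeping the annotation next to the code means the lineage travels with every deployment, and a catalog scanner can read it back without access to the decommissioned repository.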

The goal is not just to migrate the code — it is to migrate the governance. Every audit trail, every impact analysis capability, and every lineage visualization that existed in Informatica must have a counterpart in the target environment.

Governance Considerations: Compliance, Change Management, and Audit Readiness

A migration that preserves lineage technically but ignores the governance processes around it will still fail an audit. Organizations must address several governance dimensions:

Regulatory Compliance Documentation

Before decommissioning PowerCenter, produce a compliance package that includes: the complete STTM inventory, a mapping between old and new artifact names, parallel-run validation reports showing data equivalence, and sign-off records from data owners for each domain.
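A parallel-run validation report boils down to comparing the output of the legacy and migrated pipelines for the same input. The Python sketch below assumes both outputs fit in memory as lists of dictionaries and share a unique key column; production comparisons usually run inside the database or on hashed partitions instead. All sample data is invented.

```python
def compare_runs(legacy_rows, migrated_rows, key):
    """Compare two result sets by key and report row and column differences."""
    legacy = {row[key]: row for row in legacy_rows}
    migrated = {row[key]: row for row in migrated_rows}
    report = {
        "missing_in_migrated": sorted(legacy.keys() - migrated.keys()),
        "unexpected_in_migrated": sorted(migrated.keys() - legacy.keys()),
        "column_mismatches": [],
    }
    # For rows present on both sides, compare every column value.
    for k in sorted(legacy.keys() & migrated.keys()):
        old, new = legacy[k], migrated[k]
        for col in sorted(old.keys() | new.keys()):
            if old.get(col) != new.get(col):
                report["column_mismatches"].append((k, col, old.get(col), new.get(col)))
    return report


# Invented sample outputs from the old and new pipelines.
legacy_output = [
    {"customer_id": 1, "customer_first_name": "ANA"},
    {"customer_id": 2, "customer_first_name": "BO"},
    {"customer_id": 3, "customer_first_name": "CY"},
]
migrated_output = [
    {"customer_id": 1, "customer_first_name": "ANA"},
    {"customer_id": 2, "customer_first_name": "Bo"},
]

report = compare_runs(legacy_output, migrated_output, key="customer_id")
print(report)
```

An empty report for every table in a domain is the kind of evidence a data owner can sign off on.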

Change Management

Data stewards, business analysts, and compliance officers who are accustomed to navigating lineage in Informatica’s tools need training on the new lineage visualization tools. This is not purely technical — it requires updating runbooks, SOPs, and audit response procedures to reference the new platform.

Audit Readiness

Prepare for the first post-migration audit by assembling a “migration evidence package” that includes:

- Before-and-after STTM comparison: Demonstrating that the lineage chain is preserved across the migration boundary.

- Parallel-run validation reports: Showing row-level and column-level data equivalence between old and new pipelines.

- Code traceability matrix: Mapping each PowerCenter mapping to its equivalent target artifact, with links to both the source XML and the generated code.

- Approval records: Sign-offs from data domain owners, IT governance, and compliance confirming that the migration meets regulatory requirements.
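The before-and-after STTM comparison in the list above can be partly automated. The Python sketch below assumes each STTM has been loaded into a dictionary keyed by target column, with `sources` and `logic` fields; that layout is hypothetical. Transformation logic is compared as text after collapsing whitespace, so a flagged difference may simply reflect different syntax on the target platform and still needs human review.

```python
def normalize(expr):
    """Collapse whitespace so formatting changes are not reported as differences."""
    return " ".join(expr.split())


def diff_sttm(before, after):
    """Return (target column, finding) pairs where the two STTMs disagree."""
    findings = []
    for target in sorted(before.keys() | after.keys()):
        old, new = before.get(target), after.get(target)
        if old is None:
            findings.append((target, "added after migration"))
        elif new is None:
            findings.append((target, "missing after migration"))
        elif set(old["sources"]) != set(new["sources"]):
            findings.append((target, "source columns differ"))
        elif normalize(old["logic"]) != normalize(new["logic"]):
            findings.append((target, "transformation logic differs"))
    return findings


# Invented STTM extracts from before and after the migration.
sttm_before = {
    "dim_customer.customer_first_name": {
        "sources": ["customers.first_name"],
        "logic": "UPPER(LTRIM(RTRIM(first_name)))",
    },
    "dim_customer.region_code": {
        "sources": ["customers.customer_id"],
        "logic": "LKP_REGION(customer_id)",
    },
}
sttm_after = {
    "dim_customer.customer_first_name": {
        "sources": ["customers.first_name"],
        "logic": "UPPER( LTRIM( RTRIM(first_name) ) )".replace("( ", "(").replace(" )", ")"),
    },
}

findings = diff_sttm(sttm_before, sttm_after)
print(findings)
```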

Organizations that invest in lineage preservation upfront find that their first post-migration audit is smoother than expected. Those that treat lineage as an afterthought often face months of remediation work and audit findings that could have been avoided.

Key Takeaways

Data lineage is the connective tissue between your data pipelines and your governance framework. During an Informatica migration, preserving that tissue requires deliberate effort at every stage:

- Extract lineage before you migrate: Once PowerCenter is decommissioned, recovering lineage becomes far harder.

- Demand column-level granularity: Table-level lineage is insufficient for regulated environments.

- Embed lineage in the target code: Lineage must live in the new platform, not just in a spreadsheet archived after migration.

- Integrate with your data catalog: Governance teams need lineage in their tools, not in a separate system they will never check.

- Prepare for audit day one: Build the evidence package as you migrate, not after.

A migration is not complete when the code runs. It is complete when the governance team can answer any auditor’s question about any column in any report — without referencing a decommissioned system.

Why MigryX Is Essential for Data Lineage

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Column-level precision: MigryX traces data from source field to target column through every transformation step, not just table-to-table connections.

- Automated STTM generation: Source-to-Target Mapping documents are produced automatically, saving weeks of manual effort per migration wave.

- Cross-platform support: MigryX Atlas handles lineage across SAS, Informatica, DataStage, Alteryx, SSIS, and 20+ other technologies in a single unified view.

- Regulatory compliance: SOC 2 compliant audit trails ensure every data flow is documented for regulatory review.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration, turning what used to be a multi-year manual effort into a streamlined, validated process.
